DeepMind RETRO

Improving language models by retrieving from trillions of tokens

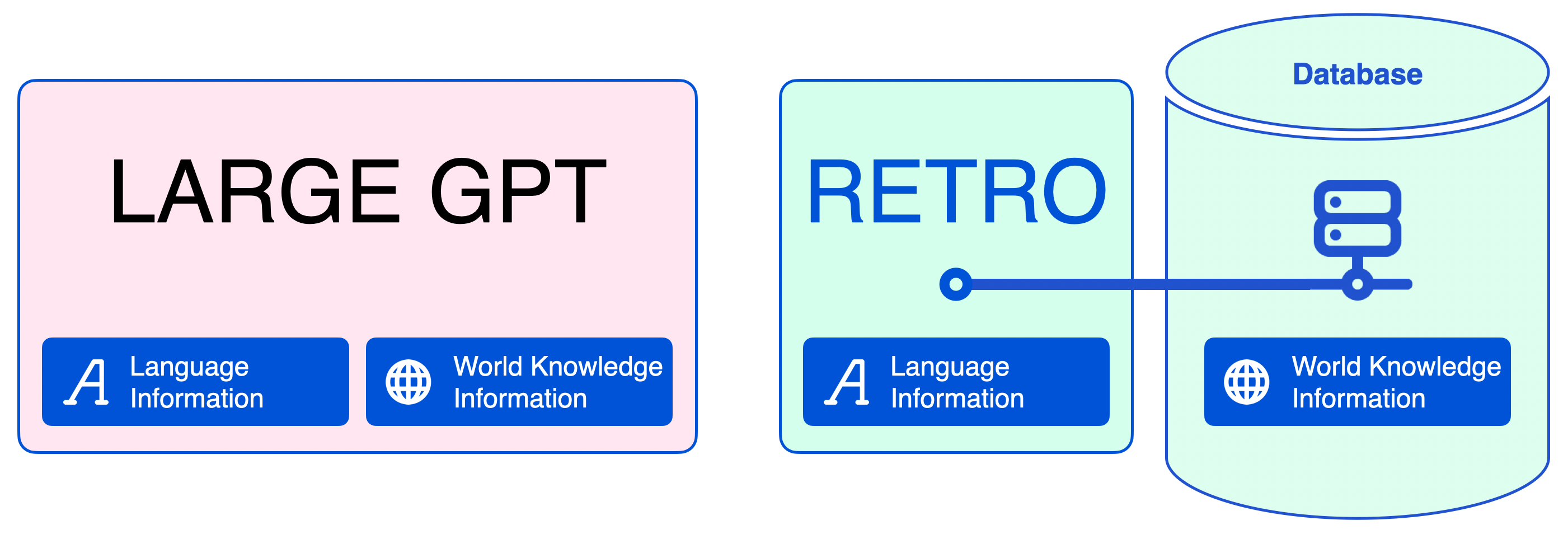

About DeepMind RETRO

DeepMind RETRO is a technique for improving auto-regressive language models by conditioning them on chunks of text retrieved from a large database of 2 trillion tokens. Chunks are selected by their local similarity to the tokens that precede the text being generated. The resulting model, the Retrieval-Enhanced Transformer (Retro), obtains performance comparable to GPT-3 and Jurassic-1 on the Pile despite using substantially fewer parameters. After fine-tuning, Retro's performance carries over to knowledge-intensive downstream tasks such as question answering.

Source: https://www.deepmind.com/publications/improving-language-models-by-retrieving-from-trillions-of-tokens
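
The retrieval step described above can be illustrated with a minimal sketch. It is not DeepMind's implementation: the bag-of-words `embed` function, the 4-token chunk size, the tiny in-memory database, and the helper names `chunk`, `cosine`, and `retrieve` are all assumptions made for illustration. A real system would use a learned encoder and approximate nearest-neighbour search over a far larger corpus, and the sketch covers only how neighbours are found, not how the model conditions on them.

```python
import math
from collections import Counter


def chunk(tokens, size):
    """Split a token list into consecutive fixed-size chunks."""
    return [tokens[i:i + size] for i in range(0, len(tokens), size)]


def embed(tokens):
    """Toy bag-of-words embedding (assumption: stands in for a learned encoder)."""
    return Counter(tokens)


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(preceding_tokens, database_chunks, k=2):
    """Return the k database chunks most similar to the preceding tokens."""
    query = embed(preceding_tokens)
    ranked = sorted(
        database_chunks,
        key=lambda c: cosine(query, embed(c)),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical miniature "database"; the one described above holds 2 trillion tokens.
database_text = (
    "the cat sat on the mat . "
    "retrieval augments language models with external text . "
    "the dog chased the ball"
)
database = chunk(database_text.split(), size=4)

# Tokens that precede the position being generated.
preceding = "language models with retrieval".split()
neighbours = retrieve(preceding, database, k=1)
print(neighbours)
```

In this sketch the retrieved neighbours are simply returned. In a retrieval-enhanced model they would be passed to the language model as additional context for predicting the next tokens.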